An outage at a market-leading SaaS company is always noteworthy. Thousands of organizations and millions of users are so reliant on these services that a widespread outage feels as surprising and disruptive as a regional power outage. But the analogy with an electricity provider is unfair. While we expect a utility to be safe, boring and just plain reliable, we also expect SaaS services to innovate relentlessly. As we pointed out a while back, this is the crux of the problem. Although they employ some of the best engineers and use sophisticated observability strategies and cutting-edge DevOps practices, SaaS companies also have to deal with ever-accelerating change and growing complexity. Occasionally, that defeats every effort to identify and resolve a problem quickly.

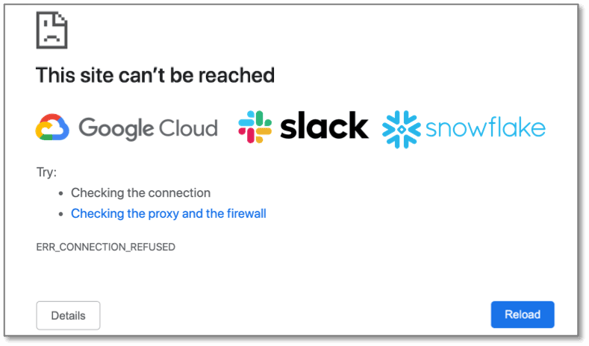

For example, Snowflake had a major outage six weeks ago: for about 4 hours, users were unable to log into the UI or found it unresponsive. The problem started when a web server running an older version of the OS ran out of disk space. After root cause analysis (RCA), the alert rules were updated. But this is a classic illustration of why alert rules can’t possibly keep up with every new failure mode, and why you need ML to find the root cause of such failures rapidly. This particular failure mode is one that ML has repeatedly proven to handle easily (see here and here).

Another recent outage impacted users of GCP, BigQuery, GKE and other Google services for almost an hour. The root cause was a quota bug introduced when Google switched to a new quota management service, combined with the absence of adequate logic to catch this new failure mode.

Slack provided another example: a 4-hour outage that started with a network disruption (unusually high packet loss) but was greatly exacerbated when the provisioning service could not keep up with demand and started adding improperly configured servers to the fleet. To add insult to injury, the observability stack itself was unreachable because of the network disruption. After RCA, a swath of corrective actions was put in place, including new runbooks, new alert rules for network disruptions, design changes and a way to bypass the observability stack. But the crux of the issue remains: the provisioning service failure is another example of an unanticipated (new) failure mode that took a long time to resolve.

Expecting humans to anticipate the unknown and figure out what happened was hard enough in the old days of simple monolithic architectures, monthly software releases, formal test plans and extensive QA. It is entirely unreasonable in a world of hundreds of intertwined microservices, multiple daily deployments and “test in production”. What is most interesting about the examples above is that in each case the vendor quickly knew there was a problem, but it took a long time and a lot of hunting to figure out what caused it (the “root cause”). A growing number of organizations now realize that the only way to find the root cause of these problems much faster (or even detect them proactively) is to employ ML to identify new failure modes and their root causes. ML in the observability domain has come a long way from the early attempts at anomaly detection and AIOps (read more about this here).